Looming Changes in Concurrent Programming

with Java Champion Cay Horstmann

TOPICS

We will discuss the following points:

- Fibers, erm, virtual threads

- Blocking is cheap

- Can have millions (=>no more need to avoid blocking)

- Charming API --just like j.l.Thread, j.u.concurrent

- No more async

- Structured concurrency -- no more goto with thread

- Make concurrency easy again?

- How should we teach this stuff?

- awaitTermination

Let's review concurrency history in Java.

CONCURRENCY ON THE JAVA PLATFORM

*1995: Java language has thread support

*1997: Java web server (predecessor of Tomcat) runs each web request in a new thread

- =>Amazing in 1997: thousands of concurrent requests

*2004: Java 5 java.util.concurrent

- =>ReentrantLock, ConcurrentHashMap, Executor, Future

*2014: Java 8

- =>Parallel streams, CompletableFuture

Then we get to Loom.

LOOM

*Threads are expensive

- =>But what can you do when one blocks?

*Asynchronous programming

- =>Callback hell, Futures, Async/await

*Loom: What if blocking wasn't expensive?

- =>Millions of concurrent fibers

- =>Each thread runs many fibers

- =>Creating, switching between fibers cheap

- =>Blocking is virtually free

- =>VM, API park, unpark blocking fibers

Is Loom the key to making concurrency easy again?

MAKE CONCURRENCY EASY AGAIN

*Not so fast

*More than one reason for concurrency

*User interfaces: UI components not threadsafe

- =>Single UI thread serializes operations

- =>Fibers won't help

- =>Keep using AsyncTask/SwingWorker

*What about parallel streams -- the previous promise to "make concurrency easy again"?

- =>Works great for non-blocking workloads...

- =>...on splittable data structures

- =>Nevertheless, parallel streams are not a universal solution

*Fibers don't add value for computationally-intensive tasks (like cryptography)

*Sweet spot:

- =>Many more tasks than threads...

- =>... that mostly block
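For contrast with the virtual-thread sweet spot, here is a minimal sketch of the kind of workload where parallel streams do fit: pure computation over a range that splits evenly. The class and method names are illustrative and not from the talk.

```java
import java.util.stream.IntStream;

class ParallelPrimes {
    // CPU-bound check with no blocking calls.
    static boolean isPrime(int n) {
        if (n < 2) return false;
        for (int d = 2; (long) d * d <= n; d++) {
            if (n % d == 0) return false;
        }
        return true;
    }

    // An int range is a splittable source, so the work divides across cores.
    static long countPrimes(int limit) {
        return IntStream.rangeClosed(1, limit)
                .parallel()
                .filter(ParallelPrimes::isPrime)
                .count();
    }

    public static void main(String[] args) {
        System.out.println("Primes up to 1,000,000: " + countPrimes(1_000_000));
    }
}
```

Virtual threads would add nothing here, since the tasks never block.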

So it is all about virtual threads...

VIRTUAL THREADS

*Tasks run in a fiber, now called a "virtual thread"

*Virtual threads mapped onto "carrier" threads

*When a virtual thread blocks, it is "parked" and the carrier thread executes another virtual thread

*Didn't we have all that with "green threads" way back in Java 1.0?

- =>When a green thread blocked, it blocked the carrier thread

*Naming is hard

- =>Originally, "lightweight" threads were called "fibers" --hence "loom"

- =>Fiber API converged to thread API

- =>"new" or "lightweight" not so good when something newer or lighter weight comes along

*"Virtual" supposed to evoke virtual memory that is mapped to actual RAM

- =>Do not think "virtual function"

But how do we code these virtual threads?

CONSTRUCTING VIRTUAL THREADS

*Quick one-off (for demos etc.)

*Builder API:

*But why are you constructing your own thread? Use an executor
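The code that went with these bullets is not part of these notes. Below is a minimal sketch of both styles, assuming JDK 21 or later, where the API was finalized as `Thread.startVirtualThread` and `Thread.ofVirtual()`. The early-access builds discussed in the talk used different method names. The class name and thread name are made up for illustration.

```java
import java.util.concurrent.atomic.AtomicInteger;

class ConstructVirtualThreads {
    // Runs two tasks in virtual threads and returns how many completed.
    static int runBoth() throws InterruptedException {
        AtomicInteger completed = new AtomicInteger();

        // Quick one-off: create and start a virtual thread in one call.
        Thread oneOff = Thread.startVirtualThread(completed::incrementAndGet);

        // Builder API: configure the thread (here, a name) before starting it.
        Thread built = Thread.ofVirtual()
                .name("demo-virtual-thread") // hypothetical name
                .start(completed::incrementAndGet);

        oneOff.join();
        built.join();
        return completed.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println("Completed tasks: " + runBoth());
    }
}
```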

You can also use ExecutorService.

USING AN EXECUTOR SERVICE

*Submit runnables and callables in the usual way:

*Can customize with thread factory:

*Don't mix with cached thread pool!
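The snippets for these bullets are missing from the notes. A sketch of what they might look like, assuming the JDK 21 names `Executors.newVirtualThreadPerTaskExecutor()` and `Executors.newThreadPerTaskExecutor(ThreadFactory)`, which may differ from the build shown in the talk. The class name and the `worker-` prefix are illustrative.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.ThreadFactory;

class VirtualThreadExecutors {
    // Submits a Runnable and a Callable in the usual way.
    static String submitTasks() throws Exception {
        Runnable greeting = () -> System.out.println("runnable ran");
        Callable<String> answer = () -> "callable result";
        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            Future<?> done = exec.submit(greeting);
            Future<String> result = exec.submit(answer);
            done.get();
            return result.get();
        }
    }

    // Customizes the threads through a factory (here, numbered names).
    static String namedThread() throws Exception {
        ThreadFactory factory = Thread.ofVirtual().name("worker-", 0).factory();
        Callable<String> whoAmI = () -> Thread.currentThread().getName();
        try (ExecutorService exec = Executors.newThreadPerTaskExecutor(factory)) {
            return exec.submit(whoAmI).get();
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println(submitTasks());
        System.out.println(namedThread());
    }
}
```

Each submitted task gets a fresh virtual thread, so there is no pool to size.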

Okay! We have the syntax, now let's try it!

KICK THE TIRES

*Download binaries from http://jdk.java.net/loom/

*Run a million fibers:

*Try it with threads

- =>On my laptop, out of memory after about 10 000 threads
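The demo program itself is not in the notes. A rough equivalent, assuming JDK 21 or later rather than the early-access build linked above, could look like this. The task count is a parameter so that the experiment can be scaled down, and the 100 ms sleep is an arbitrary stand-in for blocking work.

```java
import java.time.Duration;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicLong;

class MillionVirtualThreads {
    // Starts taskCount virtual threads that each block briefly; returns how many finished.
    static long run(int taskCount) {
        AtomicLong finished = new AtomicLong();
        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            for (int i = 0; i < taskCount; i++) {
                exec.submit(() -> {
                    Thread.sleep(Duration.ofMillis(100)); // parks the virtual thread
                    return finished.incrementAndGet();
                });
            }
        } // close() waits until every submitted task is done
        return finished.get();
    }

    public static void main(String[] args) {
        System.out.println("Finished tasks: " + run(1_000_000));
    }
}
```

To repeat the experiment with platform threads, one option is to swap the executor for `Executors.newThreadPerTaskExecutor(Thread.ofPlatform().factory())`.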

Great, it ran a million threads. Check! Now, let's inspect the project state.

STATE OF THE PROJECT

*API implementations are being made fiber friendly

- =>Thread.sleep

- =>j.u.c locks

- =>NIO, sockets

- =>JSSE implementation of TLS

*Reimplementations already in JDK 11, 12, 13

*Can't yet block on monitors (ReentrantLock is ok)

*Working on debugger, monitoring support

*Lots of "instabilities"

*Performance nowhere near where it needs to be

*Need feedback on API

*Need help with testing

The other thing Loom offers is structured concurrency.

STRUCTURED CONCURRENCY

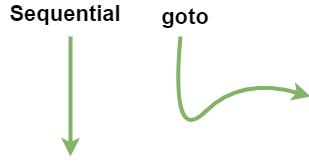

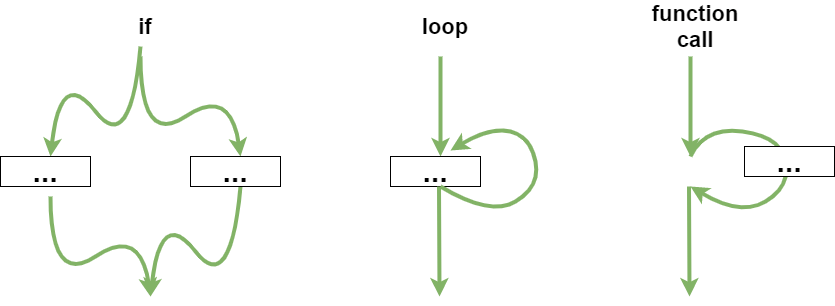

*Nathaniel Smith: "Start and forget" is like goto

*1960s: Structured programming replaces goto with branches, loops, functions

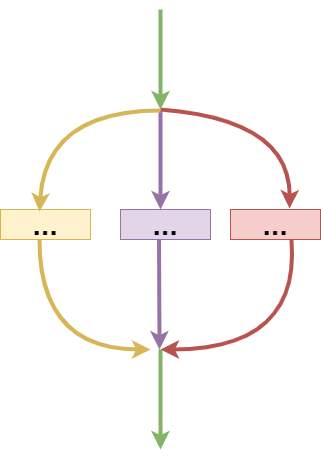

*Structured concurrency: Should do the same with concurrent tasks

- =>It waits for tasks to complete together.

Concretely, how do you structure concurrency?

STRUCTURING WITH EXECUTOR

*exec.close() blocks until all tasks are done

- =>Remember: blocking is cheap

*Executor is autocloseable:

*With callables, use invokeAny, invokeAll

*To cancel overdue tasks, use a deadline:
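The slide code is not in these notes, and the deadline method of the early-access builds is not reproduced here. As a stand-in, this sketch uses the long-standing timed overload of `invokeAll`, which cancels tasks that miss the timeout. It assumes JDK 21 or later; the class name and the timings are arbitrary.

```java
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;

class StructuredWithExecutor {
    // Returns how many tasks were cancelled because they missed the timeout.
    static long cancelledAfterTimeout() throws InterruptedException {
        Callable<String> fast = () -> "fast";
        Callable<String> slow = () -> {
            Thread.sleep(5_000); // interrupted when the timeout expires
            return "slow";
        };
        // The executor is AutoCloseable, so the block is the scope of the tasks.
        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            List<Future<String>> futures =
                    exec.invokeAll(List.of(fast, slow), 200, TimeUnit.MILLISECONDS);
            return futures.stream().filter(Future::isCancelled).count();
        } // close() returns only after every task has finished
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println("Cancelled tasks: " + cancelledAfterTimeout());
    }
}
```

No task outlives the `try` block, which is the structured part: the tasks start and end inside one lexical scope.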

So tasks can get cancelled with virtual threads, but how?

CANCELLATION

*Cooperative cancellation

*Has never been fun in Java:

*Poor match for thread pools

*Inconsistently used

- =>ExecutorService.invokeAny cancels remaining tasks

- =>CompletableFuture.anyOf doesn't

*Loom flirted with a different cancellation model

*Now back to interruption

*Interrupting blocked virtual thread is cheap (no cost)

*ExecutorService.shutdownNow, expired deadline interrupts remaining tasks
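To make the interruption model concrete, here is a small sketch (assuming JDK 21 or later; names are illustrative). A task blocked in `Thread.sleep` is interrupted by `shutdownNow` and cooperates by ending when it catches `InterruptedException`.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicBoolean;

class CooperativeCancellation {
    // Returns true if the blocked task observed the interruption.
    static boolean interruptBlockedTask() throws InterruptedException {
        AtomicBoolean sawInterrupt = new AtomicBoolean();
        CountDownLatch started = new CountDownLatch(1);
        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            exec.submit(() -> {
                started.countDown();
                try {
                    Thread.sleep(60_000); // blocked: the virtual thread is parked
                } catch (InterruptedException e) {
                    sawInterrupt.set(true); // cooperate: stop working and return
                }
            });
            started.await();    // make sure the task is running
            exec.shutdownNow(); // interrupts the tasks that are still running
        }
        return sawInterrupt.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println("Task saw interrupt: " + interruptBlockedTask());
    }
}
```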

Let's discuss the thread locals situation.

THREAD LOCALS #underConstruction #maybeChange

*Thread.Builder methods noThreadLocals(), noInheritableThreadLocals()

*Exploratory work on "lightweight thread locals"

*Bound to a scope (such as an executor), not the lifetime of the thread

*Binding is immutable

*This worked in build 16-loom+9-316 (but not in build 17-loom+2-42)

*Why not tie more closely to the executor? Other arrangements are plausible:

Let's look at a concrete example of current API issues, using Heinz Kabutz's example.

HEINZ KABUTZ'S EXAMPLE

*At JCrete, Heinz Kabutz gave a puzzler with a program that loaded thousands of Dilbert cartoon images, one per day

*For each image,

- =>Load page such as https://dilbert.com/strip/2011-06-05

- =>Find image URL in strip

- =>Load image from that URL

- =>Display or save image

*It was a mess of completable futures, somewhat like:
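The original puzzler code is not reproduced in these notes. The following is only an illustration of the style, not Heinz Kabutz's program: `fetchPage` and `fetchImage` are hypothetical stand-ins that fake the HTTP calls, and the URL is invented.

```java
import java.nio.charset.StandardCharsets;
import java.util.concurrent.CompletableFuture;

class AsyncImagePipeline {
    // Hypothetical stand-in for an asynchronous page download.
    static CompletableFuture<String> fetchPage(String date) {
        return CompletableFuture.supplyAsync(
                () -> "<img src=\"https://example.com/" + date + ".png\">");
    }

    // Extracts the value of the first src attribute.
    static String findImageUrl(String page) {
        int start = page.indexOf("src=\"") + 5;
        return page.substring(start, page.indexOf('"', start));
    }

    // Hypothetical stand-in for an asynchronous image download.
    static CompletableFuture<byte[]> fetchImage(String url) {
        return CompletableFuture.supplyAsync(() -> url.getBytes(StandardCharsets.UTF_8));
    }

    // The control flow is spread over callbacks chained onto futures.
    static CompletableFuture<Integer> load(String date) {
        return fetchPage(date)
                .thenApply(AsyncImagePipeline::findImageUrl)
                .thenCompose(AsyncImagePipeline::fetchImage)
                .thenApply(bytes -> bytes.length)
                .exceptionally(ex -> -1); // errors surface here, far from their cause
    }

    public static void main(String[] args) {
        System.out.println("Bytes loaded: " + load("2011-06-05").join());
    }
}
```

Even in this small version, a stack trace from a failing stage points into the `CompletableFuture` machinery rather than at `load`.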

That tangle of CompletableFuture calls is daunting to debug and trace. Can it be rewritten cleanly with fibers? Let's see.

GOOD USE OF FIBERS?

*With virtual threads:

*No callback code in load

- =>Blocking call to semaphore for throttling requests

- =>Blocking read of the page

- =>Blocking read of the image

*No win to use fibers -- the gating factor is the number of concurrent requests to Wikimedia

*Actually, there is a win:

- =>No-guilt call to semaphore for throttling concurrent requests

- =>Explicit tuning instead of implicit tuning through the size of the thread pool

*The article by Jetty veterans is dubious

- =>If the millions of virtual threads consume nontrivial resources, those will be the gating factor

- =>Mixed approach: kernel threads for the parts that need tight control, virtual threads for code that shouldn't have to struggle with async
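A sketch of the blocking style described above, assuming JDK 21 or later. `blockingRead` is a hypothetical stand-in that sleeps instead of making an HTTP request, and the limit of 10 concurrent requests is an arbitrary choice for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicInteger;

class ThrottledLoader {
    static final int MAX_CONCURRENT = 10; // assumed request limit
    static final Semaphore permits = new Semaphore(MAX_CONCURRENT);
    static final AtomicInteger inFlight = new AtomicInteger();
    static final AtomicInteger peak = new AtomicInteger();

    // Hypothetical stand-in for a blocking HTTP read.
    static String blockingRead(String what) throws InterruptedException {
        Thread.sleep(20);
        return "content of " + what;
    }

    // Straight-line code: acquire a permit, read the page, read the image.
    static String load(String date) throws InterruptedException {
        permits.acquire(); // blocks cheaply while too many requests are active
        try {
            peak.accumulateAndGet(inFlight.incrementAndGet(), Math::max);
            String page = blockingRead("page for " + date);
            return blockingRead("image in " + page);
        } finally {
            inFlight.decrementAndGet();
            permits.release();
        }
    }

    // Loads count images, one virtual thread each; returns the peak concurrency seen.
    static int loadAll(int count) throws InterruptedException {
        List<Callable<String>> tasks = new ArrayList<>();
        for (int i = 0; i < count; i++) {
            String date = "day-" + i; // hypothetical date key
            tasks.add(() -> load(date));
        }
        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            exec.invokeAll(tasks);
        }
        return peak.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println("Peak concurrent loads: " + loadAll(100));
    }
}
```

The throttle is a named constant next to the semaphore, not a side effect of how many pool threads happen to exist.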

All these considerations about concurrency bring us to the question: how do we teach concurrency?

HOW DO WE TEACH CONCURRENCY

*Case study: The Java Tutorials Lesson: Concurrency

*Thread.start, Thread.sleep

*Thread.interrupted, InterruptedException

*Thread.join

*Race conditions

*synchronized methods, synchronized blocks

*volatile

*Deadlocks, starvation, livelocks

*wait, notifyAll

*This is so wrong

So if that way of teaching is wrong, what approach should we adopt?

TASKS, NOT THREADS

*Let's rethink concurrency

*A Runnable is a task (presumably with a side effect)

*A Callable<T> delivers a result (hopefully without side effects)

*An executor executes tasks:

*With callables, execution yields a future:

*With Loom, we don't care that result.get() blocks.
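The snippets for the executor and future bullets are missing from the notes. A minimal sketch of the task vocabulary, assuming JDK 21 or later; the class and method names are made up.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

class TasksNotThreads {
    static int sumOfLengths(String a, String b) throws Exception {
        Runnable log = () -> System.out.println("measuring..."); // task with a side effect
        Callable<Integer> first = a::length;                     // task that delivers a result
        Callable<Integer> second = b::length;

        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            exec.execute(log);                       // an executor executes tasks
            Future<Integer> f1 = exec.submit(first); // with callables, execution yields a future
            Future<Integer> f2 = exec.submit(second);
            return f1.get() + f2.get();              // get() blocks until the result is ready
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println("Total length: " + sumOfLengths("loom", "fiber"));
    }
}
```

Nothing in the task code mentions threads at all; only the choice of executor does.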

And rather than speaking of concurrency, let's speak of task coordination.

TASK COORDINATION

*Typically, a task is decomposed into subtasks

*Is it worth executing them concurrently?

- =>To keep cores busy

- =>If they spend a lot of time blocking

*"Cores busy" is messy because it depends on the number of cores

- =>Parallel streams

- =>Recursive tasks (fork-join)

*Loom works well for blocking tasks

- =>ExecutorService.invokeAll/invokeAny for composing results

- =>Async should be left to experts (sorry CompletableFuture)
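As an illustration of composing results from blocking subtasks, here is a sketch using `invokeAll` and `invokeAny`, assuming JDK 21 or later. The `delayed` helper is hypothetical and simulates blocking work with a sleep.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

class ComposeResults {
    // Hypothetical subtask: blocks for a while, then delivers a value.
    static Callable<String> delayed(String value, long millis) {
        return () -> {
            Thread.sleep(millis);
            return value;
        };
    }

    // invokeAll: wait for every subtask; results come back in submission order.
    static List<String> all() throws Exception {
        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            List<String> results = new ArrayList<>();
            for (Future<String> f : exec.invokeAll(List.of(delayed("a", 50), delayed("b", 10)))) {
                results.add(f.get());
            }
            return results;
        }
    }

    // invokeAny: take the first successful result; the remaining tasks are cancelled.
    static String any() throws Exception {
        try (ExecutorService exec = Executors.newVirtualThreadPerTaskExecutor()) {
            return exec.invokeAny(List.of(delayed("slow", 2_000), delayed("fast", 10)));
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println(all());
        System.out.println(any());
    }
}
```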

A lot of things can go wrong with concurrent programming, so let's check that out.

WHAT CAN POSSIBLY GO WRONG?

*Java is not done

*Major structural changes get a lot of attention from very smart people

*But the interests of system/application programmers are not always represented

*They need you!

*Read those JEPs and project pages

*Try early builds

*Give feedback

*Don't get bamboozled by the surface claims ("millions of fibers")

- =>The payoff for Loom is a better programming model

- =>Same as with j.u.stream